A 2025 paper from the University of Granada, published in Frontiers in AI, framed this as the Model Variability Problem: inherent uncertainty and variability in LLMs that arises from stochastic inference mechanisms, prompt sensitivity, and biases in training data, all of which affect output consistency in ways users often do not see.

Here is the shift in thinking that changes how you build.

That is not a workaround. That is a quality signal. Disagreement earns human attention. Agreement earns confidence. The system becomes self-regulating rather than requiring constant manual oversight.

This is not a product pitch. It is an architectural illustration. The value here is not any individual model in the pool. It is the structural property of the system: outputs that most models agree on are more likely to be correct than outputs that come from one model in isolation.

Contrarian View: Challenging the best-model myth

When multiple AI models are given the same source text and asked to translate it, they frequently produce different outputs. Not wrong versus right in a simple sense, but different choices around register, terminology, idiom, and phrasing. Legal text in particular generates significant divergence because small wording differences can change meaning materially. A clause that says “shall” versus “may” is not a stylistic preference; it defines obligation.

The assumption behind model selection is that there is a stable ranking of quality. Pick the top performer, and you get top-quality output. Everything else follows from that decision.

That finding has a practical implication that rarely surfaces in vendor comparisons: if two well-regarded models regularly disagree on borderline cases, then any system built on just one of them is inheriting that model’s specific blind spots without knowing it. The benchmark score hides the gap.

The Tomedes Translation QA Tool provides a structured quality audit by comparing source and target text side by side, flagging missing content, inconsistent terminology, and linguistic errors, and generating a scored breakdown across accuracy, fluency, style, and terminology. That kind of objective output is particularly useful when an organization needs to demonstrate translation quality to a compliance team or a client, rather than simply asserting it.

The same logic applies at the model level. The durable advantage is not the choice of which model to trust. It is the design of a system that does not require any one model to be right.

Where disagreement becomes most visible

Different task types expose model variance in different ways. For some applications, outputs are evaluated subjectively and variance stays hidden. For others, the disagreement surfaces quickly because the domain has clear standards.

The problem is that framing the question that way may be missing the point entirely.

It is a reasonable question on the surface. Procurement teams want consistency. IT leaders want to reduce vendor sprawl. And everyone wants to feel that they made the optimal choice. So the conversation becomes a comparison: GPT-4o versus Gemini versus Claude. Benchmarks are cited. Leaderboards are consulted. A winner is picked.

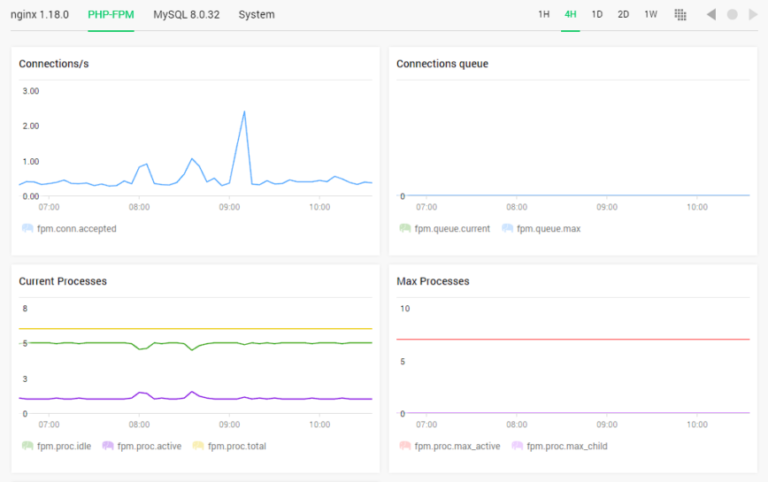

The practical version of this approach is already being deployed. MachineTranslation.com compares the outputs of 22 AI models and selects the translation that most of them agree on. Instead of trusting one AI to produce the right output, the system uses convergence across a large model set as the quality gate.
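To make the idea of a convergence-based quality gate concrete, here is a minimal sketch in Python. It is not MachineTranslation.com's actual method, which the article does not describe in detail. It assumes that "agreement" can be approximated by token-level similarity, and it picks the candidate that is, on average, closest to all the others. The function names `similarity` and `pick_consensus`, the model labels, and the sample sentences are all hypothetical.

```python
from difflib import SequenceMatcher


def similarity(a: str, b: str) -> float:
    """Token-level similarity in [0, 1]; a crude stand-in for real agreement metrics."""
    return SequenceMatcher(None, a.lower().split(), b.lower().split()).ratio()


def pick_consensus(candidates: dict[str, str]) -> tuple[str, float]:
    """Return the model whose output is closest, on average, to every other output.

    `candidates` maps a (hypothetical) model name to its translation.
    The second return value is that model's mean similarity to the rest.
    """
    if len(candidates) < 2:
        raise ValueError("consensus needs at least two candidate outputs")

    best_model, best_score = "", -1.0
    for name, text in candidates.items():
        scores = [
            similarity(text, other_text)
            for other_name, other_text in candidates.items()
            if other_name != name
        ]
        mean_score = sum(scores) / len(scores)
        if mean_score > best_score:  # ties keep the earlier candidate
            best_model, best_score = name, mean_score
    return best_model, best_score


# Illustrative inputs only: two outputs agree, one diverges on "shall" vs "may".
outputs = {
    "model_a": "The tenant shall pay rent monthly",
    "model_b": "The tenant shall pay rent monthly",
    "model_c": "The tenant may pay rent each month",
}
winner, agreement = pick_consensus(outputs)
print(winner, round(agreement, 2))
```

In this sketch the outlier is never selected, because its average similarity to the rest of the pool is the lowest. A production system would likely swap the string-similarity measure for a semantic one, but the structural point is the same: the selection depends on the pool, not on any single model.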

The broader trajectory here is visible across enterprise AI adoption. As CloudTweaks has covered in its analysis of AI’s role in enterprise integration, the most effective deployments are moving toward AI orchestrators that coordinate multiple AI models for end-to-end optimization, rather than relying on a single embedded model to handle everything.

Disagreement as a signal, not a failure

The common thread is a shift in where AI value is created. For the first year or two of practical LLM deployment, competitive advantage came from adopting models faster than competitors. That window is closing. The models themselves are widely available. The differentiator is now architecture: how outputs from those models are structured, verified, compared, and acted upon.

But research published at SIGIR 2025 complicates that picture. A study examining how different LLMs classify the same documents found that model disagreement is systematic, not random, with disagreement cases exhibiting consistent lexical patterns and producing divergent top-ranked outputs even under shared scoring functions. These models were not making random errors. They were making different errors, shaped by their training histories, in predictable and structurally distinct ways.

The real challenge in deploying AI for consequential tasks is not selecting the best single model. It is building systems that do not depend on any one model being right.

This is a fundamentally different architecture from running a single model and hoping it is right.

This pattern appears across verticals. In healthcare, multi-model orchestration is being used to preserve diagnostic disagreement as structured information rather than collapsing it into a single output. In financial services, disagreement across models is being tested as a signal of economic uncertainty. In localization and content operations, consensus architectures are replacing single-model pipelines for high-volume, high-stakes translation.

What systems built on agreement look like in practice

The gap reveals something important: a model that performs strongly on reasoning tasks may still produce unreliable output on domain-specific, terminology-heavy content. Task type matters more than headline model quality.

Most enterprise teams encounter this only when something goes wrong. A contract is reviewed and a term has shifted. A product description goes live with a meaning the marketing team did not intend. A support article confuses rather than clarifies. The instinct is to blame the model. The more useful diagnosis is that a single model was asked to carry full accountability for a task where even top models disagree.

Internal benchmarks from MachineTranslation.com, which runs translation tasks across 22 AI models simultaneously, show that individual top-tier LLMs hallucinate or fabricate content during translation between 10% and 18% of the time, based on data synthesized from Intento’s State of Translation Automation 2025 and WMT24 benchmarks. That range is wide. It also spans models that score well on general capability evaluations.

For translation workflows specifically, there are tools designed to surface exactly the kinds of errors that accumulate when AI operates without external checking.

The underlying point is that AI output quality is not self-certifying. Building an audit layer into the workflow, whether through multi-model comparison, structured QA tooling, or human review for high-stakes content, converts AI reliability from an assumption into a demonstrated property.

The QA layer: making model outputs auditable

Translation is one of the clearest cases.

CloudTweaks has covered a related dynamic in its analysis of enterprise AI breakthroughs, noting that organizations with the strongest AI deployments in 2026 are those treating AI as a strategic capability rather than a single tool, investing in governance and adaptable architectures rather than picking a platform and locking in.

Picking the best model is a 2023 question. Building systems that use models well is the question that matters now.

One operational dimension that often gets overlooked is how you verify outputs once they leave a model. This matters regardless of whether you are using one model or twenty.

Where AI infrastructure is heading

There is a question that comes up constantly in enterprise AI discussions: which model should we standardize on?

The standard response to model disagreement is to try to eliminate it. Pick a better model, tune the prompt, add guardrails. Treat disagreement as a bug in the system.

A more productive interpretation is to treat disagreement as information. When models diverge on the same input, that divergence tells you something true: the task is genuinely ambiguous, the content is at an edge case, or the output needs closer scrutiny. As Stanford University’s guidance on AI validation frameworks puts it, the recommended approach is to develop a framework where if all models agree on an output, it is deemed reliable, and if there is disagreement, further intervention or human review may be required.
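The agree-or-escalate rule described above can be sketched in a few lines of Python. This is an illustration under stated assumptions, not a reference implementation of any published framework: it treats two outputs as "agreeing" when they match after lowercasing and whitespace normalization, which is far stricter than a real system would want. The names `normalize` and `triage` and the status labels are hypothetical.

```python
def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so trivial formatting differences are ignored."""
    return " ".join(text.lower().split())


def triage(outputs: dict[str, str]) -> dict:
    """Group model outputs by normalized text and decide whether review is needed.

    Returns a status of "reliable" when every model produced the same
    normalized output, and "needs_review" otherwise. The groups show
    which models sided with which version, so a reviewer can see the split.
    """
    if not outputs:
        raise ValueError("no model outputs to compare")

    groups: dict[str, list[str]] = {}
    for model, text in outputs.items():
        groups.setdefault(normalize(text), []).append(model)

    status = "reliable" if len(groups) == 1 else "needs_review"
    return {"status": status, "groups": groups}


# Illustrative inputs only: the second call diverges on "shall" vs "may".
print(triage({"model_a": "Shall pay", "model_b": "shall  pay"})["status"])
print(triage({"model_a": "shall pay", "model_b": "may pay"})["status"])
```

Keeping the groups in the result, rather than returning only a flag, is a deliberate choice in this sketch: the disagreement itself is the information a human reviewer needs.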

The results, documented in internal benchmarks, show that this approach reduces critical translation errors to under 2%, compared to the 10-18% hallucination rate typical of individual models on the same tasks. For multi-document workflows, SMART-verified translations maintain consistent terminology at a rate exceeding 96%, against an industry baseline of around 78% for single-model outputs at equivalent volume.