If you spend any time in Linux forums, you’ve seen DistroWatch’s Page Hit Ranking cited as proof that one distro is “more popular” than another. It isn’t. The DistroWatch Page Hit Ranking (PHR) is one of the most misunderstood numbers in the Linux world, and treating it as a popularity contest will mislead you every time.

Here’s what it actually is, how it works, and other ways you can track Linux distro popularity.

How the Page Hit Ranking Works

A visit to a distribution’s page on DistroWatch.com counts as a hit toward that distro’s ranking. The site tallies these hits over several time windows: the last 7 days, 1 month, 3 months, 6 months, and 12 months. You switch between these timeframes using the dropdown in the rankings sidebar on the homepage.

To cut down on inflated numbers, DistroWatch counts a maximum of one hit per IP address per day. Visit the Fedora page ten times from the same connection and only the first visit counts. The site also filters obvious bot traffic.

That’s the whole algorithm. It’s a tally of curiosity clicks on each of the distro pages, nothing more.
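To make the counting rule concrete, here is a minimal Python sketch of a tally that follows the two rules described above: one hit per IP address per day, summed over a selectable window. It is not DistroWatch’s code. The `Visit` record layout and the `page_hit_tally` function are hypothetical, the sketch assumes the one-hit limit applies per distro page, and it leaves out bot filtering entirely.

```python
from collections import Counter
from datetime import date, timedelta

# Hypothetical record layout: (ip_address, visit_date, distro_slug).
Visit = tuple[str, date, str]


def page_hit_tally(visits: list[Visit], end: date, window_days: int) -> Counter:
    """Count deduplicated page hits per distro over the window_days ending on `end`.

    Assumptions: the one-hit-per-IP-per-day limit applies per distro page,
    and bot filtering is not modelled at all.
    """
    start = end - timedelta(days=window_days - 1)  # inclusive window start
    seen: set[tuple[str, date, str]] = set()
    hits: Counter = Counter()
    for ip, day, distro in visits:
        if not start <= day <= end:
            continue  # outside the selected timeframe
        key = (ip, day, distro)
        if key in seen:
            continue  # repeat visit from the same IP on the same day
        seen.add(key)
        hits[distro] += 1
    return hits


# Ten Fedora visits from one address on May 1, one more on May 2, one Mint visit.
visits = (
    [("203.0.113.5", date(2024, 5, 1), "fedora")] * 10
    + [("203.0.113.5", date(2024, 5, 2), "fedora")]
    + [("198.51.100.7", date(2024, 5, 1), "mint")]
)
tally = page_hit_tally(visits, end=date(2024, 5, 7), window_days=7)
print(tally)  # Counter({'fedora': 2, 'mint': 1})
```

Nothing in the tally knows whether any visitor actually runs the distro. It only ever sees page requests.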

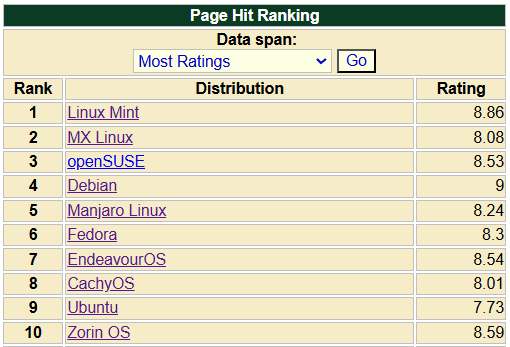

In the screenshot above, CachyOS sits at the top, followed by Mint and MX Linux, with Debian, Fedora, and Ubuntu further down. The HPD column on the right shows hits per day, averaged across the selected timeframe. The small red and green arrows next to each number show whether a distro is trending up or down compared to the previous period. Pull this view a year from now and the order will look different; that’s the point of the metric.
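The HPD figure and the trend arrows come down to simple arithmetic. The sketch below assumes HPD is total deduplicated hits divided by the number of days in the window, and that a trend compares the current window’s HPD with the window immediately before it. That comparison rule is an assumption, not DistroWatch’s documented method, so treat `hits_per_day` and `trend` as hypothetical helpers and the daily counts as made-up input.

```python
def hits_per_day(daily_hits: list[int]) -> float:
    """Average deduplicated hits per day across a window (one count per day)."""
    if not daily_hits:
        raise ValueError("window must contain at least one day")
    return sum(daily_hits) / len(daily_hits)


def trend(current: list[int], previous: list[int]) -> str:
    """Compare this window's HPD with the preceding window of the same length.

    Assumption: a plain comparison of averages; DistroWatch's exact rule may differ.
    """
    now, before = hits_per_day(current), hits_per_day(previous)
    if now > before:
        return "up"
    if now < before:
        return "down"
    return "flat"


# Made-up daily counts for illustration only.
this_week = [120, 130, 125, 140, 150, 160, 155]
last_week = [100] * 7
print(hits_per_day(this_week))      # 140.0
print(trend(this_week, last_week))  # up
```

Because HPD is an average, one burst of attention moves the whole window: six quiet days at 100 hits plus a single 1,500-hit day still averages 300 HPD, triple the baseline.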

What DistroWatch Itself Says

DistroWatch is upfront about this. On its official Page Hit Ranking page, the site states the rankings “correlate neither to usage nor to quality, and should not be used to measure the market share of distributions. They simply show the number of times a distribution page on DistroWatch.com was accessed each day, nothing more.”

The site’s editor calls it “a light-hearted way of measuring popularity.” That language matters. It’s a pulse-check on what visitors are clicking, not a census of installed systems.

Why the Ranking Misleads People

A few specific quirks turn the Page Hit Ranking into a poor proxy for actual usage:

News and releases drive hits. When a distro pushes out a new version, DistroWatch publishes the announcement on its homepage. Readers click through out of curiosity, and that distro’s hit count climbs. Rolling release projects that ship constant updates get a structural advantage over distros that release once or twice a year.

Hacker News and Reddit spikes register as “popularity.” A trending thread, a controversial blog post, or a major review can send thousands of curious visitors to a DistroWatch page in a day. None of those visitors necessarily install the distro. Some are just rubbernecking.

Community enthusiasm gets counted as user base. MX Linux famously held the #1 spot for years, partly because its community is unusually engaged with DistroWatch itself. That doesn’t mean MX Linux has more installed users than Ubuntu, Debian, or Fedora. It means MX users visit the page often.

Distros with weak SEO benefit. If you Google a distribution name and the project’s own site ranks poorly, the DistroWatch page often shows up first instead. Every one of those clicks counts toward the PHR while telling you nothing about who actually runs the OS.

Curated coverage. A distro has to be in DistroWatch’s database to be ranked at all. The editors decide which projects get listed, so some niche or new distros never appear. Even among listed distros, the audience matters: people have pointed out for years that CentOS ranks unusually low despite its heavy use in server environments and training labs, presumably because the people running those machines rarely browse DistroWatch.

Beyond Hit Counts: The Ratings Views

The Page Hit Ranking isn’t the only ranking DistroWatch offers. The same dropdown that switches between time windows also lets you sort by user ratings. These are slightly more useful than raw clicks, because they reflect what people who registered an opinion actually thought of the distro.

The “Most Ratings” view sorts by how many reviews each distro has received. This is closer to a measure of mindshare among engaged users, since you have to register and write a review for it to count.

Linux Mint, MX Linux, and openSUSE lead this view, with mainstream names like Debian, Fedora, and Ubuntu also high on the list. The order looks more like what you’d expect if you asked “which distros do enthusiasts actually have opinions about?” That’s still not market share, but it’s a better signal than page hits.

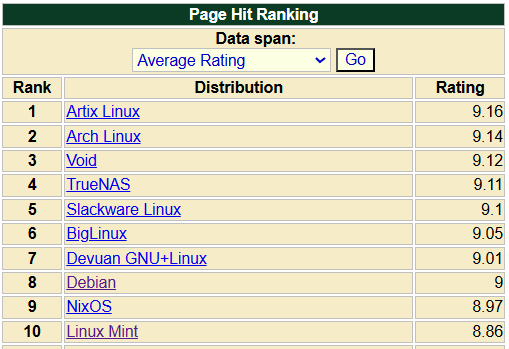

The “Average Rating” view sorts by mean score across all reviews. This one tells a different story.

The top of this list is dominated by enthusiast distros: Artix, Arch, Void, Slackware, Devuan. None of these are beginner-friendly. The pattern reflects who tends to leave reviews. People who choose Arch over Ubuntu are more likely to be invested enough in their distro choice to rate it highly. A 9.16 average for Artix tells you its small user base loves it. It doesn’t tell you Artix has more users than Ubuntu, which sits well down the list.

Both rating views are worth a look, but they suffer from the same selection bias: only motivated users leave ratings, and motivated users skew toward niche or technical distros. Useful context, not a popularity contest.

What to Use in Addition to DistroWatch

There’s no perfect public metric for Linux distribution market share. Most distros don’t phone home, which is part of why people choose them in the first place. But a few signals get you closer than the Page Hit Ranking:

Project download and mirror statistics. Some projects publish download counts or aggregate mirror traffic. Fedora and openSUSE have historically shared installation estimates derived from update-server requests, which at least reflect systems that actually checked for updates. They still undercount offline installs and overcount distro-hoppers, but they’re tied to real systems.

Forum and bug tracker activity. Active issue trackers, healthy support forums, and large subreddits suggest a real user base behind a distro. Compare the size of the Linux Mint forum or the Manjaro forum against a distro that ranks similarly on DistroWatch but has a near-empty community. The gap tells you something the PHR can’t.

Package repository mirror logs. Operators of major mirrors occasionally publish anonymized logs showing which repos pull the most traffic. These are noisy but reflect machines that regularly check for updates.

Server-side User-Agent stats from Linux-focused sites. If you run a sysadmin blog or a developer tooling site, your own analytics can show which distros your readers browse from, at least when their browser includes the distro name in its User-Agent string. That’s a small, self-selected sample, but it’s real systems making real requests. In my case, analytics show Ubuntu > Debian > Arch > Fedora.
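If you want to try this on your own site, a rough starting point is to tally Linux User-Agent strings by any distro name they contain. This Python sketch is illustrative only: the token list is an assumption, `count_distros` is a hypothetical helper, and many browsers (Chromium-based ones in particular) report only `X11; Linux x86_64` with no distro name at all.

```python
import re
from collections import Counter

# Illustrative token list only (an assumption, not an official or complete mapping).
DISTRO_PATTERNS = {
    name: re.compile(rf"\b{token}\b", re.IGNORECASE)
    for name, token in [
        ("Ubuntu", "ubuntu"),
        ("Debian", "debian"),
        ("Arch", "arch"),
        ("Fedora", "fedora"),
        ("openSUSE", "opensuse"),
    ]
}


def count_distros(user_agents: list[str]) -> Counter:
    """Tally Linux User-Agent strings by the first distro name they mention."""
    counts: Counter = Counter()
    for ua in user_agents:
        # Android UAs also contain "Linux"; skip them along with non-Linux clients.
        if "Linux" not in ua or "Android" in ua:
            continue
        for name, pattern in DISTRO_PATTERNS.items():
            if pattern.search(ua):
                counts[name] += 1
                break
        else:
            counts["unknown Linux"] += 1  # no distro name in the string
    return counts


sample = [
    "Mozilla/5.0 (X11; Ubuntu; Linux x86_64; rv:109.0) Gecko/20100101 Firefox/115.0",
    "Mozilla/5.0 (X11; Fedora; Linux x86_64; rv:109.0) Gecko/20100101 Firefox/115.0",
    "Mozilla/5.0 (X11; Linux x86_64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/120.0.0.0 Safari/537.36",
    "Mozilla/5.0 (Linux; Android 14; Pixel 8) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/120.0.0.0 Mobile Safari/537.36",
    "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/120.0.0.0 Safari/537.36",
]
print(count_distros(sample))
```

In real traffic, expect the “unknown Linux” bucket to be substantial, which is one more reason to treat the resulting ordering as rough.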

None of these are clean. But together, they give a better signal than a page-hit tally on one website.

When the PHR Is Actually Useful

The Page Hit Ranking isn’t worthless! Read for what it is, it tells you something real:

It shows what the Linux-curious are looking at right now. A spike in the 7-day ranking is a reasonable signal that a distro just released, got covered, or is having a moment. Trending data is genuinely useful for noticing new projects worth investigating.

It’s also a decent way to discover distros you’ve never heard of. Scrolling the rankings surfaces names you wouldn’t find on the front page of most Linux media. For that purpose, the PHR works great.

The trouble starts when the number gets cited as evidence of market share. It was never designed to measure that.

Conclusion

The DistroWatch PHR measures interest in DistroWatch pages. That’s it. Use it to spot trends and find new projects, not to settle arguments about which distro has the most users. For that question, no single source has a clean answer, and anyone claiming otherwise is selling you something. Put simply, tracking Linux installs isn’t easy, and since most distros don’t phone home, that’s arguably a good thing.