comparison of popular features with visualization

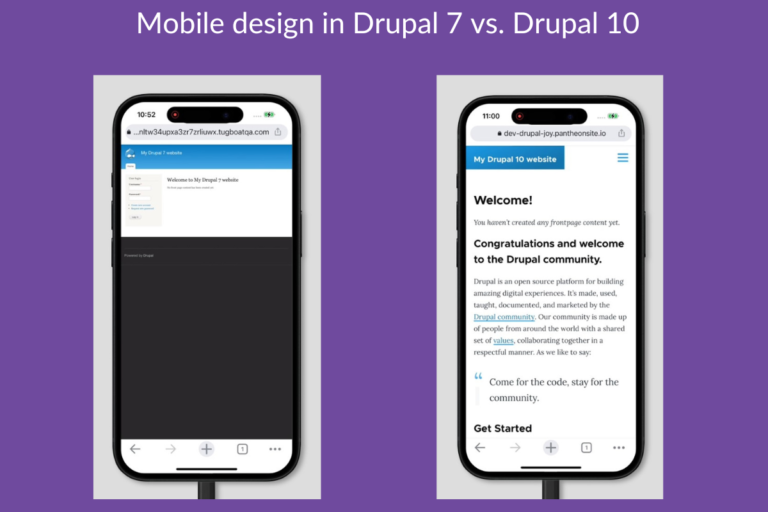

So is it time to upgrade? Less than a year is left before the EOL, so your upgrade planning needs to start today. Considering the huge architectural gap between Drupal 7 and all…

Complying with ISO 27001 means your organization has a systematic and documented approach to managing information risks and protecting valuable data assets. This reassures your customers and stakeholders that their data is properly…

Inspired by user feedback, we decided to make two changes. First, we decided to broaden our focus: not only will we improve the page-building features of Layout Builder, we will also integrate basic…

There are as many rules in public health as there are in other industries. Understanding privacy and security, and avoiding data spills, falls on the shoulders of the cybersecurity specialist. Building the right people…

Today we are talking about Drupal Single Sign On, the benefits it brings to the Drupal community, and a new book called Fog & Fireflies with guest Tim Lehnen. We’ll also cover Drupal.org…

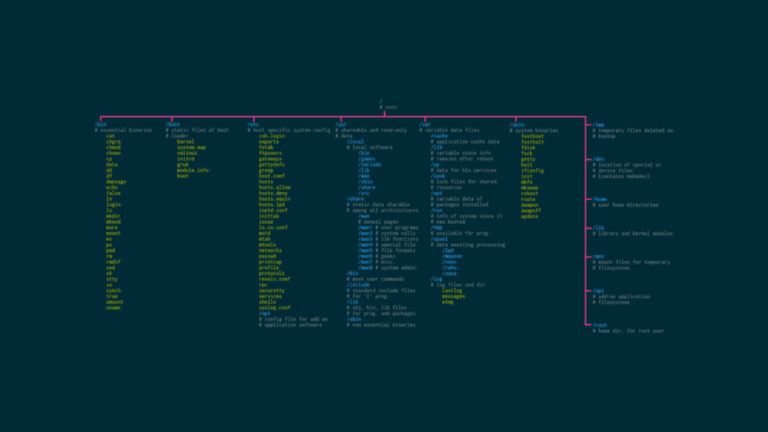

To build experience with Linux commands, there are a few things you can do. First, try to use the command line interface as much as possible. This will give you practice with the…

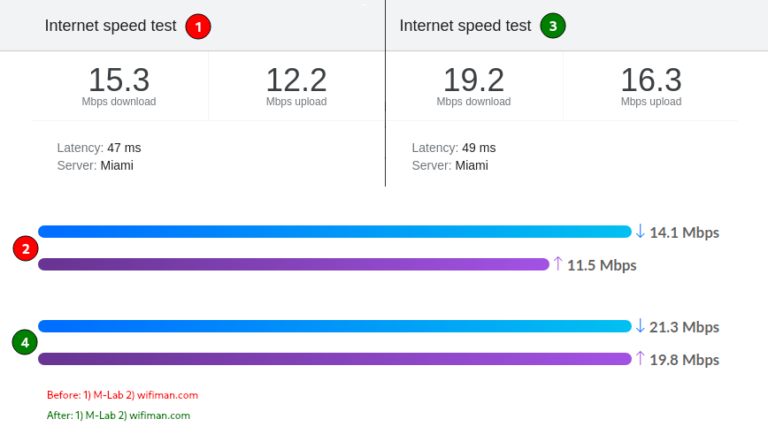

You may have been following my Linux home lab build. One of the most important decisions when building your home lab is selecting the proper router/firewall for your network. After many hours of…

Legacy systems typically operate on restrictive and inflexible processes that are difficult to adapt to modern business needs. Conversely, ITIL principles place a strong emphasis on flexibility and adaptability. Consequently, organisations must…

he remarked. The session saw lively interactions that went beyond mere technical discussions, making it a vibrant platform for networking and community building.” Besides the technical talks, the event also served as a wonderful…